10 Market Research Best Practices for Analysts in 2026

Level up your workflow with these 10 market research best practices. Learn how to work smarter with AI and automation to deliver faster, more reliable insights.

As an analyst or demand-generation specialist, you're on the front lines, tasked with transforming raw data into strategic insights. You’ve mastered the fundamentals of market research, but the tools and data sources are advancing rapidly. This presents a new challenge and a significant opportunity. Success is no longer just about diligence; it’s about achieving velocity and scale in your analysis.

This guide isn't a lecture on the basics you already know. It's a playbook for upgrading your workflow, helping you transition from manual, row-by-row analysis to scalable, repeatable processes that generate superior insights in a fraction of the time. We will explore 10 essential market research best practices specifically designed for the modern analyst. The focus is on practical implementation, demonstrating how to leverage automation and AI-enrichment tools to work smarter, not just harder.

Throughout this listicle, we’ll cover everything from defining clear objectives and structuring data collection to implementing automated batch processing with tools like Row Sherpa. You will learn how to build robust validation protocols, manage asynchronous jobs for large datasets, and standardize your documentation for future projects. The goal is to provide actionable steps that move beyond traditional spreadsheet-based methods, enabling you to deliver more impactful results with greater efficiency. Let's dive into the practices that will help you scale your expertise and accelerate your career.

1. Defining Clear Research Objectives and Hypotheses

One of the most crucial market research best practices is establishing clear objectives and testable hypotheses before a single data point is collected. You already know this is foundational, but in the age of AI, it takes on a new meaning. It's the step that ensures every subsequent action—and every dollar spent on processing—is purposeful and directly contributes to answering your most critical business questions. Instead of boiling the ocean with broad queries, you define precisely what you need to learn and what specific decisions the findings will influence.

This upfront clarity prevents scope creep and helps ensure that the insights you generate are directly applicable. When your research is designed to validate or disprove a specific assumption, the resulting data becomes a tool for confident, evidence-based decision-making rather than a collection of interesting but ultimately unactionable facts.

Practical Implementation

For teams leveraging AI-enrichment tools like Row Sherpa, this means translating your hypotheses into precise, targeted prompts. The goal is to design instructions that command the AI to extract the exact signals you need from your raw data.

- Venture Capital Example: A VC firm's hypothesis might be: "SaaS companies with usage-based pricing models and SOC 2 compliance are more likely to secure Series A funding." Their objective is to screen 500 startups to identify the top 20 that fit this thesis. The corresponding Row Sherpa prompt would be designed to scan company websites and funding data to explicitly extract "Pricing Model" and "Security Certifications," directly mapping the output to their investment criteria.

- Sales Operations Example: A RevOps team might hypothesize that companies mentioning "supply chain optimization" on their website are high-intent leads for their logistics software. The objective is to enrich 10,000 CRM records with this intent signal. Their prompt would instruct the AI to analyze each company's services page and return a simple "Yes/No" or a relevant quote if the key phrase is found.
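To make the hypothesis-to-prompt step concrete, here is a minimal Python sketch that stores a documented hypothesis alongside the decision it informs and the output fields that test it, then renders a per-row extraction prompt. The `ResearchHypothesis` class, the `build_prompt` function, and the prompt wording are hypothetical illustrations, not Row Sherpa's API; the field names come from the VC example above.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ResearchHypothesis:
    """A documented hypothesis, the decision it informs, and the fields that test it.

    Hypothetical structure for illustration; not tied to any specific tool's API.
    """
    statement: str
    decision: str
    # Output field name -> instruction for what the AI should return in that field.
    output_fields: dict[str, str] = field(default_factory=dict)


def build_prompt(h: ResearchHypothesis) -> str:
    """Render a per-row prompt that asks only for the fields the hypothesis needs."""
    lines = [
        f"We are testing this hypothesis: {h.statement}",
        f"The answer will inform this decision: {h.decision}",
        "Using only the company information provided, return exactly these fields:",
    ]
    for name, instruction in h.output_fields.items():
        lines.append(f"- {name}: {instruction}")
    lines.append('If a field cannot be determined from the source, return "Unknown".')
    return "\n".join(lines)


vc_thesis = ResearchHypothesis(
    statement=("SaaS companies with usage-based pricing and SOC 2 compliance "
               "are more likely to secure Series A funding."),
    decision="Which 20 of 500 screened startups advance to deeper diligence.",
    output_fields={
        "Pricing Model": "one of Usage-Based, Seat-Based, Flat-Rate, Unknown",
        "Security Certifications": "comma-separated list (e.g. SOC 2, ISO 27001) or None",
    },
)

print(build_prompt(vc_thesis))
```

Keeping the decision in the same object as the requested fields makes it easy to check that every output column actually maps to the decision it is meant to inform.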

Actionable Tips

- Document and Align: Before launching any data processing job, formally document your hypotheses. This creates a single source of truth that keeps cross-functional teams aligned.

- Map Prompts to Decisions: Design your AI prompts so the output fields directly inform the decision at hand. If you need to know whether a company is a "good fit," your prompt should extract the specific attributes that define that fit.

- Leverage Prompt Management: Use tools like Row Sherpa’s prompt-saving feature to lock in your research objectives. This keeps instructions consistent and repeatable across large batch-processing workflows, reducing variability between different analysts or runs.

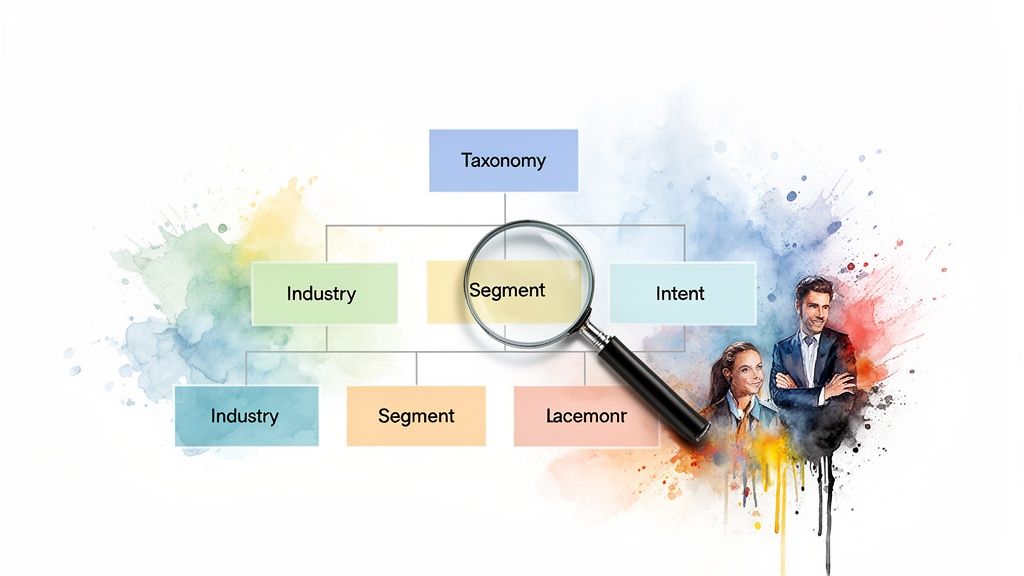

2. Structured Data Collection with Consistent Taxonomies

Another cornerstone of effective market research best practices is implementing a standardized classification system, or taxonomy, for all collected data. This approach brings structure to qualitative insights, ensuring they can be aggregated, compared, and analyzed systematically at scale. Instead of having different analysts interpret and categorize data in their own ways, a consistent taxonomy provides a predefined set of rules and hierarchies that keeps thousands of data points comparable.

This discipline is critical for teams extracting entities like intent signals, sentiment, or specific product features from unstructured sources. It transforms subjective observations into quantifiable metrics, allowing you to spot trends and patterns that would otherwise be lost in the noise of inconsistent, ad-hoc labeling.

Practical Implementation

For analysts running large-scale data enrichment projects, a consistent taxonomy is the key to producing reliable, high-quality output. When using an AI-enrichment platform like Row Sherpa, this means designing your prompts to force the AI to classify information according to your predefined structure.

- VC Analyst Example: An investment team needs to score 200+ companies based on a deal quality framework. Their taxonomy might include categories like "Team Strength" (Ex-FAANG, Repeat Founder, etc.) and "Market Positioning" (Niche Leader, Challenger, etc.). The prompt would instruct the AI to read a founder's LinkedIn profile and assign a value only from that predefined list, ensuring every company is scored on the exact same criteria.

- Market Research Example: An insights team is analyzing 5,000 customer support tickets to identify product feedback. Their taxonomy defines intent categories like "Bug Report," "Feature Request," and "Billing Inquiry." The prompt is designed to classify each ticket into one of these buckets, providing a clean, quantitative view of customer issues.

Actionable Tips

- Enforce Structure with JSON: Use Row Sherpa's JSON output validation to hold the AI to your taxonomy. This catches malformed or off-taxonomy outputs and keeps every row consistently structured for analysis.

- Test on a Small Sample: Before running a full batch job on thousands of rows, test your taxonomy on a small sample of 20-30 data points to identify any ambiguities or missing categories.

- Document Your Definitions: Create a shared document that clearly defines each category in your taxonomy with examples. This ensures every team member interprets the classification rules consistently.

- Reuse Prompts for Consistency: Save your finalized, taxonomy-enforcing prompts in Row Sherpa to maintain consistency across all future research and data enrichment workflows.
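One way to enforce a taxonomy on AI output, sketched in Python under the assumption that the prompt asks the model to reply with a JSON object containing a `category` key: parse each reply and accept it only if the label is in the predefined list. The function name, the `category` key, and the use of the support-ticket labels are illustrative; this does not reproduce Row Sherpa's built-in JSON validation.

```python
from __future__ import annotations

import json

# Taxonomy from the support-ticket example above; extend with your own documented labels.
TICKET_TAXONOMY = frozenset({"Bug Report", "Feature Request", "Billing Inquiry"})


def parse_classification(raw_output: str, allowed: frozenset[str]) -> tuple[str | None, str | None]:
    """Validate one model reply against the taxonomy.

    Returns (label, None) when the reply is valid, or (None, reason) when it is not,
    so invalid rows can be flagged for review instead of silently entering the dataset.
    """
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return None, f"invalid JSON: {exc.msg}"
    if not isinstance(data, dict) or "category" not in data:
        return None, "missing 'category' field"
    label = data["category"]
    if not isinstance(label, str) or label not in allowed:
        return None, f"label {label!r} is not in the taxonomy"
    return label, None


replies = [
    '{"category": "Feature Request"}',
    '{"category": "Complaint"}',  # plausible label, but not in the taxonomy
    "Sure! This looks like a bug.",  # free text instead of JSON
]
for reply in replies:
    print(parse_classification(reply, TICKET_TAXONOMY))
```

Returning a reason instead of raising an exception keeps a large job running while still isolating the rows that need a second look.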

3. Leveraging Multiple Data Sources and Triangulation

Relying on a single data source, no matter how reliable, creates blind spots. A core component of modern market research best practices is data triangulation: the strategic process of combining and cross-referencing information from multiple sources. This approach validates findings, reduces the inherent bias of any single dataset, and paints a much richer, more accurate picture of the market landscape.

By weaving together internal data (like CRM records or past research) with external signals (like public web data or industry databases), you build stronger, more defensible conclusions. When independent sources point to the same insight, your confidence in it rises substantially, turning a single data point into a validated strategic asset.

Practical Implementation

For teams using tools like Row Sherpa, triangulation is no longer a manual, time-consuming task. You can programmatically enrich your primary dataset with real-time external information, creating a unified and more powerful source of truth directly within your workflow.

- VC Analyst Example: An analyst might cross-reference their internal due diligence notes on a startup with web-sourced data on recent leadership hires, market positioning found in press releases, and product reviews. A prompt can instruct the AI to perform a live web search for these signals, adding new columns to their existing spreadsheet and instantly validating or challenging their initial thesis.

- Market Researcher Example: A researcher can augment quantitative survey responses with qualitative sentiment data scraped from online forums, social media, and product review sites. The AI can be tasked to search for mentions of a specific product feature and categorize the public sentiment as "Positive," "Negative," or "Neutral," adding a crucial layer of context to their survey results.

Actionable Tips

- Prioritize and Layer: Identify your primary internal dataset, then strategically layer on external sources that will best fill its gaps. Not all sources are equal, so prioritize them based on relevance and reliability.

- Document Your Sources: For every key insight, maintain a clear record of which data sources were used to derive it. This transparency is crucial for defending your conclusions and ensuring analytical rigor.

- Flag Contradictions: Design your process to automatically flag significant discrepancies between sources. A contradiction isn't a failure; it's an opportunity for deeper investigation and a more nuanced understanding.

- Use Web-Search Augmentation: Leverage features like Row Sherpa's web search to seamlessly pull real-time external signals into your existing CSVs. This automates the triangulation process, allowing you to enrich thousands of records at once.
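The triangulation, source-documentation, and contradiction-flagging tips can be sketched in plain Python: merge an internal record set with an external one on a shared key, record which source supplied each value, and flag fields where the sources disagree. The company keys, field names, sample values, and the rule of preferring the internal value are all made-up assumptions for illustration.

```python
from __future__ import annotations


def triangulate(internal: dict[str, dict], external: dict[str, dict], fields: list[str]) -> list[dict]:
    """Merge two sources keyed by company domain.

    For each field, keep the internal value when present (an assumed priority rule),
    list the sources that supplied a value, and flag fields where both sources
    have a value and they disagree.
    """
    merged = []
    for key, base in internal.items():
        ext = external.get(key, {})
        row: dict = {"company": key, "conflicts": []}
        for name in fields:
            a, b = base.get(name), ext.get(name)
            row[name] = a if a is not None else b
            row[f"{name}_sources"] = [src for src, v in (("internal", a), ("external", b)) if v is not None]
            if a is not None and b is not None and a != b:
                row["conflicts"].append(name)
        merged.append(row)
    return merged


# Made-up sample data: CRM records and web-sourced signals for the same companies.
crm = {"acme.io": {"industry": "Fintech", "employees": 120},
       "globex.com": {"industry": "Logistics"}}
web = {"acme.io": {"industry": "Insurtech", "employees": 120},
       "globex.com": {"employees": 850}}

for row in triangulate(crm, web, ["industry", "employees"]):
    print(row["company"], row["conflicts"], row["employees_sources"])
```

Companies that appear only in the external source are ignored here, on the assumption that the internal dataset is the primary list being enriched.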

4. Automated Batch Processing for Consistency and Scale

Manual data review is a significant bottleneck in modern market research. One of the most impactful market research best practices is automated batch processing, which applies the same rules or analysis criteria to thousands of records in a single job. Because every data point is evaluated with identical logic, this approach reduces the variability and human error inherent in manual review. As a result, you can dramatically accelerate time-to-insight and scale your research efforts without sacrificing consistency.

Automating this process transforms large-scale data enrichment and categorization from a multi-day task for a team of analysts into an efficient, repeatable workflow. This frees up valuable analyst time from monotonous tasks, allowing them to focus on higher-level strategic analysis and interpretation of the results.

Practical Implementation

Using an AI-enrichment tool like Row Sherpa, you can operationalize this practice by uploading a CSV and applying a single, well-defined prompt to every row. This ensures uniform processing whether you're analyzing a hundred records or a hundred thousand. For a step-by-step walkthrough, see the guide on building a batch process for your CSV with LLMs.

- Sales Operations Example: A sales ops team can process a list of 5,000 new leads in minutes. A single automated job can enrich their CRM with crucial data points like industry, company size, and an initial "fit" assessment based on website content, preparing the list for immediate sales outreach.

- Venture Capital Example: An analyst can screen 500 target companies against a complex investment thesis overnight. The batch job consistently applies the firm's criteria, such as identifying specific technologies or market positioning, to surface the most promising opportunities by morning.

- Market Research Example: A team can categorize 10,000 unstructured customer feedback entries by sentiment, product feature, and theme in a single job. This provides a structured, quantitative overview of qualitative data that would be impossible to achieve manually at scale.
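At its core, batch processing means one prompt template applied identically to every row. The Python sketch below reads a CSV, fills a fixed template from each row's columns, and passes the result to a model function. `call_model` is a placeholder parameter for whatever LLM client or enrichment service you use (it is not a Row Sherpa function), the column names are hypothetical, and `fake_model` exists only so the sketch runs offline. The optional `limit` argument doubles as a pilot-batch switch.

```python
from __future__ import annotations

import csv
import io
from typing import Callable, Iterable

# One fixed template for every row; {company} and {website_text} must be CSV columns.
PROMPT_TEMPLATE = (
    "Company: {company}\n"
    "Website text: {website_text}\n"
    "Return only the company's industry as a short label."
)


def run_batch(rows: Iterable[dict], template: str,
              call_model: Callable[[str], str], limit: int | None = None) -> list[dict]:
    """Apply the same template to each row; stop after `limit` rows for a pilot run."""
    results = []
    for i, row in enumerate(rows):
        if limit is not None and i >= limit:
            break
        prompt = template.format(**row)
        results.append({**row, "industry": call_model(prompt).strip()})
    return results


sample_csv = io.StringIO(
    "company,website_text\n"
    "Acme Freight,We move freight across North America\n"
    "Globex Pay,Payments infrastructure for online merchants\n"
    "Initech,Enterprise software for reporting\n"
)


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    return "Logistics" if "freight" in prompt.lower() else "Other"


pilot = run_batch(csv.DictReader(sample_csv), PROMPT_TEMPLATE, fake_model, limit=2)
for r in pilot:
    print(r["company"], "->", r["industry"])
```

Running the same function without `limit` processes the full file with exactly the same template, which is what keeps pilot and production results comparable.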

Actionable Tips

- Start with a Pilot Batch: Before processing thousands of rows, test your prompt on a small sample (20-50 rows) to validate the logic and review output quality.

- Use Asynchronous Processing: Launch large jobs to run in the background or outside of working hours. This maximizes efficiency without interrupting other critical tasks.

- Build a Feedback Loop: Systematically review a sample of outputs from each batch job. Use these insights to iteratively refine your prompts for better accuracy and relevance over time.

- Save and Reuse Prompts: Utilize features like Row Sherpa's prompt library to save and reuse your validated prompts. This ensures consistency across different datasets and research projects.

5. Segmentation and Cohort Analysis

A powerful market research best practice is to move beyond aggregated data by implementing segmentation and cohort analysis. This involves dividing your dataset into meaningful subgroups based on shared characteristics like company size, industry, or customer stage. Analyzing patterns within these segments reveals nuanced insights that are often obscured in a homogenized view, preventing you from applying a one-size-fits-all strategy to a diverse market.

This granular approach ensures your findings are highly relevant and actionable for specific target audiences. Instead of generating broad conclusions, you can identify precisely how needs, behaviors, and opportunities differ across various customer types or market segments, leading to more targeted and effective strategies.

Practical Implementation

For teams using AI enrichment to scale their research, segmentation can be built directly into the data extraction workflow. The key is to instruct the AI to not only find your primary data points but also to extract the criteria needed to segment the results effectively.

- Sales Operations Example: A RevOps team wants to understand which firmographic signals best predict conversion for different company sizes. When enriching CRM data, their Row Sherpa prompt would extract key signals (e.g., "mentions 'API integration'") and simultaneously extract "Employee Count" and "Annual Revenue." This allows them to later filter the results to see which signals correlate most strongly with closed-won deals for SMBs versus enterprise clients.

- VC Analyst Example: An analyst needs to apply different thesis-screening criteria to startups at various stages. Their prompt can be designed to first identify the company's funding stage (Seed, Series A, Growth) and then apply conditional logic or separate analysis based on that cohort. This ensures that seed-stage companies are not unfairly compared against the metrics expected from a growth-stage firm.

Actionable Tips

- Extract Segments Upfront: Use your AI enrichment tool, like Row Sherpa, to extract segmentation criteria (industry, stage, geography) in the same batch job as your primary analysis. This saves time and ensures data consistency.

- Define Segments Pre-Analysis: Formally define your cohort criteria before running any jobs. This keeps categorization consistent across different datasets and over time, making longitudinal analysis possible.

- Ensure Adequate Sample Sizes: Aim for enough observations within each segment (a common rule of thumb is 30 or more) so that differences between segments reflect real patterns rather than random noise.

- Track Segments Over Time: Continuously monitor how insights and trends evolve within each key segment. This can reveal emerging market shifts or changes in customer behavior that represent new opportunities or risks.
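The segmentation tips translate into a short Python sketch: group enriched rows by a segment column, compare outcomes for rows with and without a signal inside each segment, and flag segments that fall below a minimum size. The column names, the 30-observation threshold (the guideline from the tips above), and the sample deals are made-up assumptions for illustration.

```python
from __future__ import annotations

from collections import defaultdict


def _rate(rows: list[dict], outcome_key: str) -> float | None:
    """Share of rows where the outcome is truthy, or None for an empty group."""
    return sum(bool(r[outcome_key]) for r in rows) / len(rows) if rows else None


def signal_by_segment(rows: list[dict], segment_key: str, signal_key: str,
                      outcome_key: str, min_n: int = 30) -> dict[str, dict]:
    """Per segment: outcome rate with vs. without the signal, plus a sample-size flag."""
    groups: dict[str, list[dict]] = defaultdict(list)
    for r in rows:
        groups[r[segment_key]].append(r)
    report = {}
    for segment, members in groups.items():
        with_signal = [r for r in members if r[signal_key]]
        without_signal = [r for r in members if not r[signal_key]]
        report[segment] = {
            "n": len(members),
            "rate_with_signal": _rate(with_signal, outcome_key),
            "rate_without_signal": _rate(without_signal, outcome_key),
            "enough_data": len(members) >= min_n,
        }
    return report


# Made-up enriched CRM rows: segment, whether the site mentions "API integration", deal outcome.
deals = (
    [{"size": "SMB", "mentions_api": True, "won": i < 15} for i in range(20)]
    + [{"size": "SMB", "mentions_api": False, "won": i < 5} for i in range(20)]
    + [{"size": "Enterprise", "mentions_api": True, "won": i < 1} for i in range(5)]
    + [{"size": "Enterprise", "mentions_api": False, "won": i < 2} for i in range(5)]
)
for segment, stats in signal_by_segment(deals, "size", "mentions_api", "won").items():
    print(segment, stats)
```

In this made-up data the signal looks strong for SMB deals, while the Enterprise segment is flagged as too small to support a conclusion either way.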

6. Iterative Testing and Refinement of Research Methodology

A common mistake in large-scale data projects is assuming the initial methodology is perfect. A core tenet of modern market research best practices is embracing an agile, iterative approach. Instead of running a flawed analysis across your entire dataset, you first test your hypotheses and methods on a small, manageable sample. This allows you to review initial results, identify weaknesses, and refine your approach before committing significant time and resources.

This "pilot test" philosophy significantly reduces the risk of generating inaccurate or irrelevant insights. By validating your methodology on a small scale, you ensure that when you finally process thousands or tens of thousands of records, the output is robust, reliable, and directly aligned with your objectives.

Practical Implementation

For analysts using AI-enrichment tools like Row Sherpa, this means rigorously testing your prompts on a small batch of data. The goal is to catch edge cases, misunderstandings by the AI, and formatting errors early, allowing you to fine-tune your instructions for maximum accuracy before scaling up.

- Venture Capital Example: An analyst needs to screen 1,000 companies for a specific GTM motion. Before processing the full list, they test their prompt on 50 companies where the outcome is already known. They discover that the AI sometimes labels "partner-led" motions as "sales-led," so they refine the prompt's instructions to be more explicit, preventing a large-scale error.

- Market Researcher Example: A researcher is using AI to classify 5,000 open-ended survey responses into sentiment categories (Positive, Neutral, Negative, Mixed). They first run a pilot on 100 responses and manually check the AI’s work. They find "Mixed" is often mislabeled, so they add specific examples and definitions to their prompt to improve its classification accuracy for the full run.

Actionable Tips

- Always Start with a Pilot Batch: Before launching a large job in Row Sherpa, process a pilot batch of 20-50 rows. This is your chance to validate the prompt's logic and output quality.

- Manually Review Pilot Outputs: Randomly sample 20-30 outputs from your pilot run and check them by hand. This qualitative check is crucial for identifying subtle errors or edge cases the AI might be missing.

- Iterate and Document: Refine your prompt based on the pilot results. Document each version and the rationale for the changes so your team can understand how the final methodology was developed.

- Benchmark Accuracy: Calculate a simple accuracy metric on your pilot results (e.g., % of entries correctly categorized) to create a quality benchmark before proceeding with the full dataset.
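The pilot-and-benchmark loop can be sketched in a few lines of Python: draw a reproducible random pilot sample, compare the AI's labels with labels you already know, and count which mistakes recur so the next prompt version can target them. The function names, the GTM labels, the pilot results, and the fixed random seed are hypothetical.

```python
from __future__ import annotations

import random
from collections import Counter


def pilot_sample(rows: list, n: int = 50, seed: int = 7) -> list:
    """Reproducible random pilot sample (all rows if there are fewer than n)."""
    return random.Random(seed).sample(rows, min(n, len(rows)))


def accuracy(predicted: list[str], expected: list[str]) -> float:
    """Share of pilot rows where the AI label matches the known label."""
    if len(predicted) != len(expected) or not expected:
        raise ValueError("need two non-empty lists of equal length")
    return sum(p == e for p, e in zip(predicted, expected)) / len(expected)


def common_mislabels(predicted: list[str], expected: list[str]) -> Counter:
    """Count (expected, predicted) pairs for wrong answers, e.g. partner-led read as sales-led."""
    return Counter((e, p) for p, e in zip(predicted, expected) if p != e)


# Hypothetical pilot results for the GTM-motion example, scored against known answers.
known = ["Sales-led", "Partner-led", "Product-led", "Partner-led", "Sales-led"]
v1_output = ["Sales-led", "Sales-led", "Product-led", "Sales-led", "Sales-led"]

# One log entry per prompt version documents how the methodology evolved.
prompt_log = [{"version": "v1",
               "accuracy": accuracy(v1_output, known),
               "top_errors": common_mislabels(v1_output, known).most_common(1)}]
print(prompt_log)
```

Logging the top recurring error alongside each version's accuracy makes the rationale for every prompt revision explicit.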

7. Validation and Quality Assurance Protocols

One of the most essential market research best practices is establishing systematic validation and quality assurance (QA) protocols. This step ensures that your research results, whether generated manually or through automation, are accurate, reliable, and trustworthy for decision-making. It involves a structured process of spot-checking outputs, comparing results against known benchmarks, and flagging anomalies before the data is put to use.

When leveraging AI-powered tools for large-scale data enrichment, this validation becomes non-negotiable. An error in a prompt or data source can compound across thousands of records, turning a minor issue into a major data integrity crisis. A rigorous QA process acts as a critical failsafe, safeguarding the quality of your insights and the strategic decisions they inform.

Practical Implementation

For teams using an AI enrichment platform like Row Sherpa, validation means treating automated outputs with professional skepticism. The goal is to verify that the AI's interpretations and extractions match human judgment and the source material before accepting the results wholesale.

- Sales Operations Example: A sales ops team enriches 10,000 CRM contacts with industry and employee count data. Before importing, they spot-check a random sample of 50 records, manually verifying the AI-generated classifications against each company's LinkedIn profile to ensure accuracy and prevent mis-segmentation.

- Venture Capital Example: An analyst uses an AI tool to screen 1,000 pitch decks for specific traction metrics. To validate the model's performance, they compare the automated outputs for 20 known companies (both funded and unfunded) against their own prior analysis to confirm the tool correctly identified the key performance indicators.

- Market Research Example: A research team automates sentiment analysis on 5,000 customer reviews. They first have a senior analyst manually code 100 reviews. They then compare the AI's classifications against this "gold standard" set to calculate an accuracy score and determine if the model is reliable enough for the full dataset.

Actionable Tips

- Create a Validation Checklist: Document the specific criteria for a "correct" output. For CRM enrichment, this might include verifying company name, industry, and location against an official source. This process is a core component of effective data cleaning best practices.

- Sample, Don't Skim: Always review a meaningful percentage (e.g., 5-10%) of your automated outputs manually. Random sampling is more effective than just checking the first few rows.

- Track Accuracy Over Time: Calculate and log accuracy metrics for each research job. A decline in accuracy can signal that a prompt needs refinement or a data source has changed.

- Flag and Isolate Errors: Tag any rows that fail validation. This allows you to decide whether to exclude them, correct them manually, or refine your prompt and rerun the job on the failed subset.
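A validation checklist is easiest to apply consistently when it is written as code. This Python sketch checks each enriched CRM row against a few example rules, tags failures with reasons so they can be isolated and rerun, and draws a random (rather than first-N) spot-check sample of a configurable fraction. The field names, rules, sample rows, and 5% default are assumptions; substitute your own documented criteria.

```python
from __future__ import annotations

import math
import random


def check_row(row: dict, allowed_industries: set[str]) -> list[str]:
    """Return a list of checklist failures for one enriched row (empty list = pass)."""
    errors = []
    if not str(row.get("company_name") or "").strip():
        errors.append("missing company_name")
    if row.get("industry") not in allowed_industries:
        errors.append(f"unexpected industry {row.get('industry')!r}")
    emp = row.get("employee_count")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(emp, int) or isinstance(emp, bool) or emp <= 0:
        errors.append("employee_count must be a positive integer")
    return errors


def split_by_validation(rows: list[dict], allowed_industries: set[str]) -> tuple[list[dict], list[dict]]:
    """Tag every row with its errors and separate passing rows from failing ones."""
    passed, failed = [], []
    for row in rows:
        errs = check_row(row, allowed_industries)
        (failed if errs else passed).append({**row, "validation_errors": errs})
    return passed, failed


def spot_check_sample(rows: list, fraction: float = 0.05, seed: int = 42) -> list:
    """Random sample of roughly `fraction` of the rows (at least one) for manual review."""
    k = max(1, math.ceil(len(rows) * fraction))
    return random.Random(seed).sample(rows, min(k, len(rows)))


industries = {"Software", "Logistics", "Financial Services"}
enriched = [
    {"company_name": "Acme Freight", "industry": "Logistics", "employee_count": 240},
    {"company_name": "", "industry": "Software", "employee_count": 35},
    {"company_name": "Globex Pay", "industry": "Fintech?", "employee_count": "unknown"},
]
ok, needs_review = split_by_validation(enriched, industries)
print(len(ok), "passed;", [r["validation_errors"] for r in needs_review])
```

The failed subset keeps its original fields plus the reasons, so it can be corrected by hand or fed back into a rerun with a refined prompt.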

8. Real-Time Monitoring and Asynchronous Job Management

In large-scale research projects, efficiency is paramount. A key market research best practice is asynchronous processing, which lets you launch massive data jobs without tying up your computer or your time. Instead of watching a progress bar for hours, you can start a task, move on to other priorities, and pick up the results when the job is complete. This approach is a game-changer for managing concurrent projects and maximizing analyst productivity.

This "fire-and-forget" approach transforms how teams handle high-volume data enrichment and analysis. It allows you to schedule resource-intensive tasks for off-peak hours, like overnight or on weekends, ensuring that large datasets are ready for analysis at the start of the next business day without disrupting daily workflows.

Practical Implementation

Platforms architected for asynchronous workflows, like Row Sherpa, are built to handle this at scale. The system processes your request in the background, freeing your local machine and your attention for more strategic work. You can monitor job status in real-time and access the completed files from a centralized history log whenever you're ready.

- Sales Operations Example: A sales ops team launches a 50,000-row CRM enrichment job on a Friday evening. The job runs over the weekend, and the team returns on Monday morning to a fully enriched dataset, ready for lead scoring and territory assignment without losing a single hour of productivity.

- VC Analyst Example: An analyst schedules daily screening jobs to automatically process a feed of new startup submissions. Each morning, they review the results from the previous day's asynchronous run, allowing them to focus on high-potential companies rather than manual data entry and vetting.

- Market Research Example: A research team queues up five separate customer feedback enrichment jobs, each containing thousands of open-ended survey responses. By running these jobs asynchronously overnight, the team can begin qualitative analysis first thing in the morning with structured, AI-tagged data.
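The fire-and-forget pattern can be illustrated locally with Python's `asyncio`: jobs start as background tasks, each task registers a completion callback (where a real setup might send an email, Slack message, or webhook), and the caller only waits when it actually needs the results. `enrichment_job` is a hypothetical stand-in that sleeps instead of doing work; on a hosted platform the processing would happen server-side.

```python
from __future__ import annotations

import asyncio


async def enrichment_job(name: str, n_rows: int) -> dict:
    """Hypothetical stand-in for a long-running enrichment job."""
    await asyncio.sleep(0.01)  # simulated processing time
    return {"job": name, "rows": n_rows, "status": "complete"}


def notify(task: asyncio.Task, outbox: list[str]) -> None:
    """Completion hook; a real setup might post to email, Slack, or a webhook here."""
    result = task.result()
    outbox.append(f"{result['job']} finished ({result['rows']} rows)")


async def run_queue(jobs: dict[str, int], outbox: list[str]) -> list[dict]:
    """Start every job in the background, then wait for all of them at the end."""
    tasks = []
    for name, n_rows in jobs.items():
        task = asyncio.create_task(enrichment_job(name, n_rows))
        task.add_done_callback(lambda t: notify(t, outbox))
        tasks.append(task)
    # Other work could happen here while the jobs run.
    return await asyncio.gather(*tasks)


outbox: list[str] = []
results = asyncio.run(run_queue({"Feedback_Batch_1": 3000, "Feedback_Batch_2": 4500}, outbox))
print(outbox)
```

`asyncio.gather` returns results in the order the jobs were submitted, while the notifications arrive in whatever order the jobs finish.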

Actionable Tips

- Schedule During Off-Hours: Use the asynchronous architecture of a tool like Row Sherpa to launch large data processing jobs during evenings or weekends, making better use of available resources.

- Establish Clear Naming Conventions: Give jobs consistent, descriptive names so team members can easily find and track results (e.g., 'CRM_Enrichment_Q42024_SoftwareLeads').

- Set Up Job Completion Alerts: Configure email, Slack, or webhook notifications to alert you or your team the moment a job is finished, ensuring no time is wasted.

- Track Job History: Maintain a log of completed jobs to prevent duplicate work and create a reference for what data has already been processed.
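Job history is most useful when it can answer "have we already run this?" automatically. The Python sketch below fingerprints a job by its input file and prompt text, keeps a small in-memory log keyed by that fingerprint, and declines to register duplicate work. The `JobHistory` class is hypothetical (a team would more likely back it with a shared database or spreadsheet), and the job name reuses the naming-convention example from the tips.

```python
from __future__ import annotations

import hashlib


def job_fingerprint(input_bytes: bytes, prompt_text: str) -> str:
    """Identify a job by its exact input data and prompt (same inputs -> same fingerprint)."""
    digest = hashlib.sha256()
    digest.update(input_bytes)
    digest.update(b"\x00")  # separator between the data and the prompt
    digest.update(prompt_text.encode("utf-8"))
    return digest.hexdigest()[:16]


class JobHistory:
    """Minimal in-memory job log; hypothetical stand-in for a shared history store."""

    def __init__(self) -> None:
        self._by_fingerprint: dict[str, str] = {}

    def register(self, job_name: str, input_bytes: bytes, prompt_text: str) -> tuple[bool, str]:
        """Return (True, job_name) for new work, or (False, earlier_name) for a duplicate."""
        fp = job_fingerprint(input_bytes, prompt_text)
        if fp in self._by_fingerprint:
            return False, self._by_fingerprint[fp]
        self._by_fingerprint[fp] = job_name
        return True, job_name


history = JobHistory()
leads_csv = b"company,website\nAcme,acme.io\n"
prompt = "Return the company's industry."
print(history.register("CRM_Enrichment_Q42024_SoftwareLeads", leads_csv, prompt))
print(history.register("CRM_Enrichment_Q42024_SoftwareLeads_rerun", leads_csv, prompt))
```

Fingerprinting on content rather than on names means a renamed copy of an already-processed file is still recognized as duplicate work.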

9. Actionable Insight Extraction and Stakeholder Communication

Data analysis reaches its full potential only when insights are translated into concrete business actions. This critical market research best practice focuses on moving beyond raw data points to deliver clear, actionable recommendations tailored to specific stakeholders. It’s about bridging the gap between what the data says and what the business should do, ensuring your research drives tangible outcomes.

The goal is to distill complex findings into a few pivotal insights that matter most and package them for immediate consumption and action. This transforms the research function from a data provider into a strategic partner, directly influencing decisions related to product roadmaps, sales priorities, and investment strategies.

Practical Implementation

For junior analysts using AI-powered tools, this means engineering prompts to produce decision-ready outputs, not just more data to sift through. By integrating the "so what?" directly into your data enrichment process, you accelerate the path from analysis to action.

- Sales Operations Example: A RevOps analyst hypothesizes that companies with open engineering roles for "cloud security" are prime targets for their new cybersecurity platform. Instead of just extracting job titles, they design a Row Sherpa prompt to return a "Lead Score" (High/Medium/Low) based on the presence and seniority of these roles, directly feeding a prioritized list to the sales team.

- Market Research Example: A market research team analyzes 10,000 customer reviews using sentiment analysis. Their objective is to guide the product roadmap. The prompt is structured to not only classify sentiment but also to categorize feedback into predefined feature request buckets (e.g., "UI Improvement," "Integration Request"), providing a quantified, prioritized list for the product managers.

Actionable Tips

- Design Prompts for Decisions: Structure your prompts to yield direct answers. Instead of extracting a company's industry, ask: "Is this company in a priority industry (Tech, Finance, Healthcare)? Yes/No." This minimizes post-processing.

- Clarify Stakeholder Needs: Before starting, ask stakeholders: "What specific decision will this data inform?" Use their answer to frame your entire analysis and reporting.

- Create 1-Page Summaries: Synthesize your findings into a concise executive summary. Highlight the 3-5 most critical insights and their direct business implications, making it easy for busy leaders to grasp the key takeaways.

- Translate Insights to Next Steps: Don't just report findings; recommend actions. For example, "Our analysis shows 40% higher engagement among fintech companies; therefore, we recommend launching a targeted Q3 marketing campaign for this vertical."

- Track Impact: Schedule a follow-up meeting a few weeks after sharing your report to see if the insights were used and what the results were. This feedback loop is essential for improving future research projects.
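If you want the rubric behind a decision-ready output to be explicit and auditable, you can write it down as code and then either spell the same rules out in your prompt or apply them to extracted job titles after enrichment. The Python sketch below encodes a High/Medium/Low lead score for the cloud-security example and the Yes/No priority-industry check from the tips. The seniority words and scoring thresholds are hypothetical choices, not a standard.

```python
from __future__ import annotations

# Hypothetical rubric: which title words count as senior, and which industries are priority.
SENIOR_WORDS = {"head", "director", "vp", "principal", "lead", "chief"}
PRIORITY_INDUSTRIES = {"Tech", "Finance", "Healthcare"}


def lead_score(open_roles: list[str], focus: str = "cloud security") -> str:
    """High if a senior role mentions the focus area, Medium if any role does, else Low."""
    relevant = [title.lower() for title in open_roles if focus in title.lower()]
    if not relevant:
        return "Low"
    # Split titles into words so 'lead' matches 'Lead Engineer' but not 'leadership'.
    if any(SENIOR_WORDS & set(title.replace(",", " ").split()) for title in relevant):
        return "High"
    return "Medium"


def is_priority_industry(industry: str) -> str:
    """Yes/No answer that needs no further post-processing."""
    return "Yes" if industry in PRIORITY_INDUSTRIES else "No"


print(lead_score(["Head of Cloud Security", "Account Executive"]))
print(lead_score(["Cloud Security Engineer"]))
print(lead_score(["Data Analyst"]), is_priority_industry("Finance"))
```

Writing the rubric down this way also gives stakeholders something concrete to review before the score starts driving sales priorities.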

10. Documentation and Process Standardization for Repeatability

A key component of sophisticated market research best practices is building a system of record for your methodologies. By documenting research processes, prompt templates, taxonomies, and findings, you create institutional knowledge. This ensures that your insights remain consistent over time, even as your team evolves. Instead of relying on individual analysts' tribal knowledge, standardized processes guarantee that research quality and comparability are maintained across quarters and years.

This practice transforms ad-hoc research projects into a scalable, repeatable program. It enables faster onboarding for new team members and ensures that anyone on the team can replicate a past analysis with confidence. This level of standardization is crucial for longitudinal studies or any ongoing research that tracks market changes, making your data more reliable for strategic planning.

Practical Implementation

For teams scaling their data enrichment and analysis efforts with tools like Row Sherpa, documentation becomes the backbone of efficiency. Standardized prompts and taxonomies ensure that data processed by different team members or at different times is directly comparable.

- Sales Operations Example: A global sales ops team documents its standard CRM enrichment taxonomy (e.g., Tier 1/2/3 accounts, key technologies used, intent signals). They create and share a master Row Sherpa prompt across regional teams to ensure every lead is evaluated against the exact same criteria, leading to a consistent global pipeline.

- Venture Capital Example: A VC firm maintains a library of vetted investment-thesis screening prompts. An analyst looking for "fintechs with embedded insurance APIs" can pull the firm’s approved prompt, ensuring their initial screening aligns with the fund's established methodology, saving time and reducing variability.

- Market Research Example: A consumer insights team documents its sentiment classification taxonomy (e.g., Positive, Negative, Neutral, Feature Request) and the exact review process. This allows them to analyze product reviews year-over-year with the confidence that the classification logic has remained consistent.

Actionable Tips

- Create a Prompt Library: Use Row Sherpa’s prompt-saving feature to build a central library. Name prompts clearly (e.g., "B2B_SaaS_Intent_Signal_Enrichment_v2") and document their use case and any limitations.

- Write One-Page Runbooks: For each major research workflow, create a simple runbook detailing input data requirements, the specific prompt to use, validation checks, and how to interpret the output.

- Maintain a Change Log: Keep a record of when and why taxonomies or prompts are updated. This context is invaluable for understanding historical data.

- Store Documentation Centrally: Use a shared wiki, Notion page, or Google Doc so processes are accessible, searchable, and easy for the whole team to update.
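A prompt library with a built-in change log can be as simple as the Python sketch below: each save creates a new numbered version, names follow the `_v2` suffix pattern from the tip above, and every version records why it changed. The `PromptLibrary` class, the prompt text, and the dates are hypothetical illustrations of the practice, not Row Sherpa's prompt-saving feature.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class PromptVersion:
    version: int
    text: str
    reason: str
    saved_on: date


@dataclass
class PromptLibrary:
    """Hypothetical versioned prompt store with a per-prompt change log."""
    _prompts: dict[str, list[PromptVersion]] = field(default_factory=dict)

    def save(self, base_name: str, text: str, reason: str, saved_on: date | None = None) -> str:
        """Store a new version and return its versioned name, e.g. 'Name_v2'."""
        versions = self._prompts.setdefault(base_name, [])
        v = PromptVersion(len(versions) + 1, text, reason, saved_on or date.today())
        versions.append(v)
        return f"{base_name}_v{v.version}"

    def latest(self, base_name: str) -> PromptVersion:
        """Most recent version of a prompt."""
        return self._prompts[base_name][-1]

    def change_log(self, base_name: str) -> list[str]:
        """One line per version: number, date saved, and reason for the change."""
        return [f"v{v.version} ({v.saved_on.isoformat()}): {v.reason}" for v in self._prompts[base_name]]


library = PromptLibrary()
library.save("B2B_SaaS_Intent_Signal_Enrichment",
             "Does the website mention supply chain optimization? Answer Yes/No.",
             "Initial version", date(2026, 1, 6))
name = library.save("B2B_SaaS_Intent_Signal_Enrichment",
                    "Does the website mention supply chain optimization? Answer Yes/No and quote the sentence.",
                    "Added supporting quote after pilot review", date(2026, 2, 3))
print(name)
print("\n".join(library.change_log("B2B_SaaS_Intent_Signal_Enrichment")))
```

Because old versions are kept rather than overwritten, historical outputs can always be traced back to the exact prompt that produced them.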

10-Point Market Research Best Practices Comparison

| Practice | 🔄 Implementation Complexity | ⚡ Resource Requirements | 📊 Expected Outcomes | 💡 Ideal Use Cases | ⭐ Key Advantages |
|---|---|---|---|---|---|
| Defining Clear Research Objectives and Hypotheses | Medium: stakeholder alignment and scope definition | Low–Medium: upfront time; few people required | Focused, actionable insights; fewer irrelevant data points | Any study setup; VC thesis definition; prompt design | Increases alignment; reduces wasted work; clearer decision criteria |
| Structured Data Collection with Consistent Taxonomies | Medium–High: taxonomy design and governance | Medium: subject-matter input, maintenance, validation | Comparable, aggregatable data ready for analysis | Large qualitative datasets; CRM enrichment; benchmarking | Enables quantitative analysis of qualitative data; reduces bias |
| Leveraging Multiple Data Sources and Triangulation | High: integration and reconciliation logic | High: data access, compute, compliance overhead | Higher confidence in findings; broader signal coverage | Due diligence; enrichment; trend and competitive analysis | Validates results across sources; surfaces external signals |
| Automated Batch Processing for Consistency and Scale | Medium: workflow and prompt optimization | Medium: compute/token costs and initial setup | Rapid processing at scale; uniform outputs; lower per-unit cost | Mass enrichment; screening thousands of records | Eliminates manual bottlenecks; consistent application at scale |
| Segmentation and Cohort Analysis | Medium: segment definitions and statistical considerations | Medium: sufficient sample sizes and analysis tools | Segment-specific insights; targeted strategies and prioritization | Market segmentation; lead scoring; cohort-based analysis | Reveals heterogeneity; improves targeting and ROI measurement |
| Iterative Testing and Refinement of Methodology | Low–Medium: pilot design and feedback loops | Low–Medium: pilot samples, reviewer time | Reduced risk; improved accuracy before full-scale runs | New prompts/taxonomies; methodology validation | Catches flaws early; improves final methodology quality |
| Validation and Quality Assurance Protocols | Medium: sampling plans and validation frameworks | Medium–High: manual review, benchmark datasets | Reliable, auditable results; fewer operational errors | High-stakes decisions; compliance-sensitive analyses | Provides confidence; enables root-cause fixes and traceability |
| Real-Time Monitoring & Asynchronous Job Management | Low–Medium: monitoring and error-handling setup | Low–Medium: scheduling tools, notifications | Better throughput; predictable job completion; less idle wait | Nightly batches; concurrent large jobs; recurring pipelines | Frees analyst time; improves resource utilization and scheduling |
| Actionable Insight Extraction & Stakeholder Communication | Medium: synthesis and stakeholder alignment | Medium: analyst time, visualization/reporting tools | Faster decision-making; higher adoption of findings | Executive reports; product roadmaps; sales prioritization | Translates data into decisions; increases research impact |
| Documentation & Process Standardization for Repeatability | Medium: documentation effort and change management | Low–Medium: time to create and maintain runbooks | Repeatable, consistent processes; smoother onboarding | Ongoing programs; multi-analyst teams; audits | Ensures consistency; reduces onboarding time; supports audits |

Putting It All Together: From Best Practice to Daily Practice

Navigating the landscape of modern market research can feel like trying to assemble a high-performance engine while it's already running. You're expected to be precise, fast, and strategic, often all at once. The ten best practices we've explored aren't just a theoretical checklist; they represent a fundamental operational upgrade. They are the blueprints for transforming your research function from a reactive data-gathering service into a proactive, strategic intelligence hub that drives critical business decisions.

The core thread weaving through these principles is the shift from manual, one-off projects to scalable, repeatable systems. By establishing clear objectives, building structured taxonomies, and validating your data sources, you lay the groundwork for reliability. Embracing iterative testing, segmentation, and robust documentation ensures your methods evolve and improve, project after project. This systematic approach is the bedrock of all impactful market research.

The True Value of Adopting These Market Research Best Practices

Mastering these concepts is about reclaiming your most valuable asset: your time for strategic thinking. The goal isn't just to produce better reports; it's to elevate your role. When you automate batch processing, implement real-time monitoring, and standardize your workflows, you systematically eliminate the bottlenecks and tedious manual labor that consume your day. This frees you from the drudgery of data wrangling and allows you to focus on the high-value work that truly matters: interpreting complex signals, uncovering non-obvious opportunities, and translating raw data into compelling narratives that stakeholders can act upon.

Think of it as the difference between being a data technician and a data strategist. A technician executes tasks. A strategist designs systems, anticipates needs, and delivers insights that shape the future of the business. By embedding these market research best practices into your daily operations, you build a powerful, efficient engine that handles the heavy lifting, empowering you to take the driver's seat.

Your Actionable Roadmap for Implementation

Adopting this entire framework overnight is unrealistic. The key is to start small and build momentum. Here's a practical way to begin integrating these practices into your workflow:

- Select Your Starting Point: Pick one or two practices that address your most significant pain point right now. Is your data collection chaotic? Start with Structured Data Collection with Consistent Taxonomies. Are you drowning in manual CSV work? Focus on Automated Batch Processing for Consistency and Scale.
- Pilot on a Single Project: Apply your chosen practice(s) to your very next research project. Treat it as a pilot program. Document your process, the tools you use, and the time you save. Measure the improvement in data quality or the speed of insight delivery.
- Showcase Your Wins: Don't keep your success a secret. Share your results with your manager and team. A simple "We cut processing time on this dataset by 70% by automating our cleaning process" or "Consistent taxonomies allowed us to merge three datasets without manual rework" is a powerful testament to the value of working smarter.
- Iterate and Expand: Once you've proven the value of one practice, choose another to integrate. Gradually, you will build a comprehensive, interconnected system where each best practice reinforces the others, creating a compounding effect on your team's efficiency and impact.
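When you document the time saved in a pilot and later quote it to stakeholders, it helps to compute the figure the same way every time. The sketch below assumes you logged elapsed hours for a comparable baseline run and for the pilot run; `percent_time_saved` and `speedup` are hypothetical helper names. It also shows why "X% faster" is worth phrasing carefully: a 70% cut in processing time corresponds to roughly a 3.3x throughput gain, not a 1.7x one.

```python
def percent_time_saved(baseline_hours: float, pilot_hours: float) -> float:
    """Percentage reduction in elapsed time versus the baseline run."""
    if baseline_hours <= 0 or pilot_hours < 0:
        raise ValueError("baseline must be positive and pilot non-negative")
    return (baseline_hours - pilot_hours) / baseline_hours * 100


def speedup(baseline_hours: float, pilot_hours: float) -> float:
    """How many times more work fits in the same time (baseline / pilot)."""
    if baseline_hours <= 0 or pilot_hours <= 0:
        raise ValueError("both durations must be positive")
    return baseline_hours / pilot_hours


if __name__ == "__main__":
    # Illustrative numbers only: a 10-hour manual run versus a 3-hour pilot.
    baseline, pilot = 10.0, 3.0
    print(f"Time saved: {percent_time_saved(baseline, pilot):.0f}%")  # Time saved: 70%
    print(f"Speedup: {speedup(baseline, pilot):.1f}x")  # Speedup: 3.3x
```

Reporting both numbers, along with how the baseline was measured, makes the win easier for a manager to verify and harder to misread.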

By methodically building these habits, you don’t just improve a single project; you create a resilient, scalable research operation. This journey transforms market research from a series of disjointed tasks into a streamlined, strategic function that consistently delivers a competitive advantage. You become the architect of a smarter, more agile insights engine for your entire organization.

Ready to stop wrestling with CSV files and start focusing on strategy? Row Sherpa is the AI-powered data enrichment and automation platform designed to implement these market research best practices at scale. Visit Row Sherpa to see how you can turn messy data into structured, actionable insights in minutes and automate your research workflows today.