AI for Data Analysis: A Practical Guide to Transforming Insights

Unlock the power of AI for data analysis. This practical guide explores proven techniques, real-world use cases, and tools that turn raw data into revenue.

So, what do we actually mean by "AI for data analysis"?

Think of it less as a tool and more as a superpower for your team. It's about using algorithms to sift through mountains of raw data, spot patterns, make predictions, and handle the kind of complex work that would take a human analyst weeks, if not months. It’s like having an analyst who can read and understand millions of data points in an instant, pulling out insights you didn't even know were there.

From Manual Work To Intelligent Automation

Imagine trying to manually categorize 100,000 customer survey responses. The whole process would be painfully slow, riddled with human error, and wildly inconsistent. By the time you finished, the data would probably be stale anyway.

This is exactly the problem modern AI for data analysis solves. It shatters the old limits of manual grunt work and traditional software.

Instead of just matching keywords or following rigid rules, AI—especially the Large Language Models (LLMs) we have today—can interpret context, nuance, and even intent. It can make sense of unstructured text, numbers, and complex business logic at a massive scale. This finally lets businesses apply consistent, intelligent analysis across datasets that were previously just too big or messy to touch.

The Core Business Problem AI Solves

At its heart, AI for data analysis closes a huge gap every business faces: the chasm between the data you have and the answers you need.

Most teams are drowning in information—from CRMs, product reviews, market reports, you name it. The problem isn't a lack of data; it's the sheer impossibility of processing it all efficiently.

AI steps in by offering:

- Scalability: Analyze thousands or even millions of rows in minutes, not days.
- Consistency: Apply the exact same logic to every single data point, without getting tired or biased.
- Depth: Uncover the subtle patterns and correlations that are completely invisible to the human eye.

The real power here is that AI acts as a force multiplier. It takes over the repetitive, soul-crushing tasks, which frees up your experts to focus on what they do best: strategy, decision-making, and creative problem-solving.

This shift from manual to automated analysis is a massive change. Let's break down the key differences.

Traditional Data Analysis vs AI-Powered Analysis

The old way of doing things was linear and labor-intensive. You'd pull data, clean it by hand, run some predefined reports, and hope you found something useful. It was slow and couldn't handle the messy, unstructured data that holds the most valuable insights. AI flips that model on its head.

| Aspect | Traditional Methods | AI for Data Analysis |
|---|---|---|
| Speed & Scale | Slow, manual processing. Limited by human bandwidth. | Extremely fast. Can process millions of records in minutes. |
| Data Types | Primarily handles structured data (clean spreadsheets). | Excels at unstructured data (text, reviews, reports). |
| Consistency | Prone to human error, bias, and fatigue. | Highly consistent; applies the same logic every time. |
| Insight Depth | Relies on known queries and predefined rules. | Discovers hidden patterns and predictive insights. |
| Automation | Minimal. Requires constant human intervention. | Highly automated, from data cleaning to insight generation. |
| Cost | High labor costs for repetitive tasks. | Lowers operational costs by automating manual work. |

The move to AI isn't just an upgrade; it’s a fundamental change in how we find answers in our data. It's about moving from reacting to what happened yesterday to predicting what will happen tomorrow.

This evolution is powering huge economic growth. The global AI market is projected to grow from USD 375.93 billion in 2026 to USD 2,480.05 billion by 2034, mostly driven by productivity gains in data-heavy industries. You can dig into the numbers in this market expansion report from Fortune Business Insights.
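To get a feel for what those two figures imply, you can work out the compound annual growth rate yourself. The sketch below uses only the two numbers quoted above; the `cagr` helper is just an illustrative name.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1

# Figures quoted above: USD 375.93 billion (2026) to USD 2,480.05 billion (2034).
rate = cagr(375.93, 2480.05, 2034 - 2026)
print(f"Implied growth: {rate:.1%} per year")
```

That works out to somewhere between 26 and 27 percent a year, sustained for eight years.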

Platforms like Row Sherpa and others are part of a new wave of no-code, AI tools built to make this power accessible, turning what used to be a complex data engineering problem into a straightforward, automated workflow for any team.

The Core AI Techniques Driving Modern Analysis

To really get what AI for data analysis can do, we need to pop the hood and look at the specific techniques doing the heavy lifting. Don't think of these as fuzzy academic concepts, but as specialized tools in an AI's toolkit. Each one is built for a particular kind of job.

These techniques are the engine behind automated insights. They’re what allow a machine to read, understand, and structure information from raw text with a speed and consistency no human team can match.

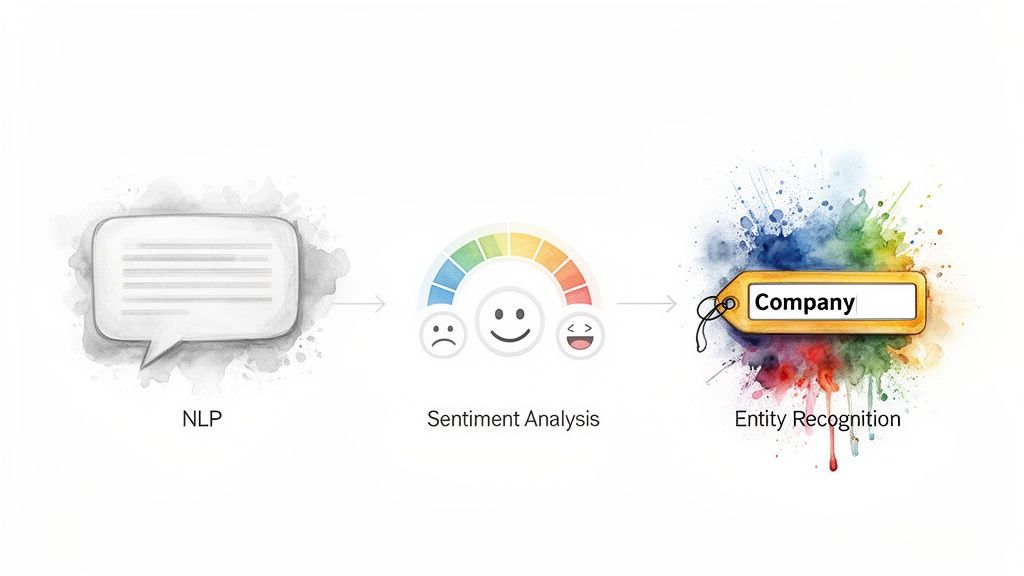

Natural Language Processing

At the very center of it all is Natural Language Processing (NLP). This is the bedrock technology that gives AI the ability to make sense of human language. It’s the difference between a machine just matching keywords and one that actually understands context, grammar, and intent.

For instance, a sales ops team could use NLP to automatically sort through thousands of inbound support tickets. Instead of a person reading every single message, the AI gets whether a ticket is about a "billing issue," "technical bug," or "feature request" and sends it to the right place. An entire manual triage process becomes an automated workflow.

Sentiment Analysis

Building on top of NLP, sentiment analysis is how an AI figures out the emotional tone behind a piece of text. It reads the language and classifies it as positive, negative, or neutral, giving you a quick pulse check on what customers or the market are thinking.

Imagine you just launched a new product and got 5,000 online reviews in the first week. Reading them all manually is a non-starter. But an AI model can score every single one instantly. This gives your product team a live dashboard of customer satisfaction and immediately flags critical feedback that needs a response.

Entity Recognition

Finally, Named Entity Recognition (NER) works like a super-powered search function for specific data points you care about. It scans text and pulls out predefined categories of information—the "entities"—that are most valuable for your analysis.

These entities can be anything you need them to be:

- Company Names: To identify which competitors are mentioned in market reports.
- Locations: To extract cities or countries from customer feedback and spot regional trends.
- Product SKUs: To pull specific product codes from unstructured order notes.

When you put these techniques together, you get a seriously powerful analytical system. An AI platform can read a company’s website, use entity recognition to pull its industry, apply sentiment analysis to gauge its brand voice, and deliver a perfectly structured, enriched lead profile.

This combination is what makes modern AI for data analysis so effective. It’s not just one technology but a layered approach that turns messy, unstructured text into clean, actionable data that’s ready for your next big decision. Platforms like Row Sherpa package these capabilities into a simple, no-code interface, making this level of analysis accessible to any business team.
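To make the idea of pulling entities out of text concrete, here is a minimal rule-based sketch. It is a stand-in for a learned NER model, not the real thing: the SKU format (`ABC-1234`) and the competitor names are made-up assumptions for illustration.

```python
import re

# Hypothetical SKU format for illustration: three capital letters, a dash, four digits.
SKU_PATTERN = re.compile(r"\b[A-Z]{3}-\d{4}\b")

# Hypothetical list of competitor names to look for.
COMPETITORS = ["Acme Corp", "Globex"]

def extract_entities(text: str) -> dict:
    """Return the SKUs and competitor names mentioned in a piece of text."""
    skus = SKU_PATTERN.findall(text)
    lowered = text.lower()
    companies = [name for name in COMPETITORS if name.lower() in lowered]
    return {"skus": skus, "companies": companies}

note = "Customer swapped ABC-1234 for XYZ-9876 after comparing us with Globex."
print(extract_entities(note))
```

Fixed rules like these break as soon as the text deviates from the expected pattern, which is exactly where a trained model earns its keep: it can cope with spelling variants and context that a regular expression misses.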

How Winning Teams Use AI for Data Analysis

Theory is one thing, but you only really get AI's value when you see it in the wild. Top-tier teams aren’t just running experiments; they’re plugging AI directly into their core operations to work faster, spot opportunities, and just plain make smarter calls. These aren’t sci-fi concepts—they're practical, money-making applications happening right now.

Let's walk through three common scenarios where AI flips tedious manual work into a genuine strategic edge. Each one shows a clear "before" and "after," so you can see the impact for yourself.

Use Case 1: CRM and Lead Enrichment

The old way: A sales ops team pulls a list of 1,000 new leads from a webinar. An analyst then starts the soul-crushing task of manually researching each one. They spend days digging through websites, trying to figure out a company's industry, size, and if they're even a decent fit. The process is slow, wildly inconsistent, and full of guesswork. The result? A pile of low-quality leads that waste sellers' time.

The new way: The team uploads that same list to an AI platform. In minutes, the system is already at work, visiting each company's website, reading the content, and pumping structured data back into the CRM.

- Categorization: It reads the website's text and assigns a precise industry tag. No more "Software" vs. "SaaS" vs. "Tech."
- Scoring: It automatically scores every lead against the company's Ideal Customer Profile (ICP).
- Enrichment: It pulls out key details like employee counts or specific technologies the company uses.

What comes out is a perfectly enriched and prioritized list. The sales team can finally stop digging and start talking to prospects who actually matter.
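Once a lead has been enriched into structured fields, scoring it against an ICP is the easy part. Here is a minimal sketch, assuming a hypothetical ICP (target industries, a minimum employee count, a set of preferred technologies) and made-up weights; none of these values come from a real scoring model.

```python
from dataclasses import dataclass, field

@dataclass
class Lead:
    company: str
    industry: str
    employees: int
    technologies: set = field(default_factory=set)

# Hypothetical Ideal Customer Profile; every value here is an assumption.
ICP_INDUSTRIES = {"SaaS", "Fintech"}
ICP_MIN_EMPLOYEES = 50
ICP_TECHNOLOGIES = {"Salesforce", "HubSpot"}

def icp_score(lead: Lead) -> int:
    """Score a lead from 0 to 100 using simple, illustrative weights."""
    score = 0
    if lead.industry in ICP_INDUSTRIES:
        score += 50
    if lead.employees >= ICP_MIN_EMPLOYEES:
        score += 30
    if lead.technologies & ICP_TECHNOLOGIES:
        score += 20
    return score

leads = [
    Lead("Tiny Bakery", "Food", 8),
    Lead("Northwind", "SaaS", 120, {"Salesforce"}),
]
ranked = sorted(leads, key=icp_score, reverse=True)
for lead in ranked:
    print(lead.company, icp_score(lead))
```

The hard part in practice is the step before this one: turning a free-text website into reliable `industry`, `employees`, and `technologies` values, which is where the AI does its work.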

Use Case 2: Automated Deal Screening

The old way: A venture capital firm is flooded with thousands of inbound applications from startups. Junior analysts are tasked with sifting through a mountain of pitch decks and websites, trying to find the few that match the firm’s very specific investment thesis. It’s a massive bottleneck, and you just know good deals are slipping through the cracks from sheer fatigue and human error.

The new way: The firm feeds a custom prompt—one that perfectly captures its investment thesis—into an AI model. Then it runs a list of 5,000 startups through the system.

The AI acts like a tireless junior analyst. It reads every single company description and website, scoring each one's alignment with the thesis. It then flags the top 50 most promising companies and provides a structured summary explaining why each one is a good match.

Partners get to skip the noise entirely and focus their due diligence on a pre-vetted, high-quality shortlist.
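The shortlisting step can be sketched as follows, assuming the model has already given each startup a thesis-alignment score between 0 and 1. The company names, the scores, and the `shortlist` helper are all hypothetical.

```python
import heapq

def shortlist(scored: dict, top_n: int = 50) -> list:
    """Return the top_n (name, score) pairs, highest score first."""
    return heapq.nlargest(top_n, scored.items(), key=lambda item: item[1])

# Hypothetical scores a model might return for thesis alignment.
scores = {"AlphaGrid": 0.91, "BetaLoop": 0.42, "GammaPay": 0.77, "DeltaFarm": 0.15}

for name, score in shortlist(scores, top_n=2):
    print(f"{name}: {score:.2f}")
```

Keeping the score next to each name makes it easy to attach the model's written rationale later and to move the cutoff if 50 turns out to be too many or too few.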

Use Case 3: Scalable Market Research

The old way: A product team gets 10,000 open-ended survey responses about a new feature. To make sense of it all, multiple people have to read every single response and manually tag it based on a predefined list of topics. The tagging is inconsistent from person to person, the process takes a week or more, and critical product decisions are left waiting.

The new way: The team uploads the raw survey text to an AI data tool and gives it the same taxonomy in a simple prompt. The AI gets to work, reading every response and applying the right tags with perfect consistency. In less than an hour, the team has a clean, structured dataset that clearly shows which themes are popping up the most.
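Once every response carries tags, finding the dominant themes is a simple count. A sketch, assuming the tagging step returns a list of tags per response (the tags and data below are invented):

```python
from collections import Counter

# Hypothetical output of the tagging step: one list of tags per survey response.
tagged_responses = [
    ["pricing", "onboarding"],
    ["pricing"],
    ["performance"],
    ["onboarding", "pricing"],
]

theme_counts = Counter(tag for tags in tagged_responses for tag in tags)

for theme, count in theme_counts.most_common():
    print(f"{theme}: {count}")
```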

This kind of rapid, scalable analysis is exactly why the AI software market is booming. The global valuation is on track to hit USD 390.91 billion by 2025, driven by the massive demand for platforms that solve these exact problems without requiring a data science team. You can dive deeper and explore the full market research on AI growth from Grand View Research to see the numbers for yourself.

Choosing Your AI Toolkit: Build vs. Buy

Once you see the potential of AI for data analysis, the next question is always the same: how do you actually get started? You've really only got two paths. You can either build a custom solution from the ground up or you can subscribe to a ready-made SaaS platform.

Each approach has its trade-offs, and the right choice boils down to your team's resources, timeline, and technical chops. Going the in-house route gives you total control, but it's a slow, expensive, and demanding commitment that requires a dedicated team of data scientists and engineers.

For most businesses, especially those without a deep bench of AI specialists, a SaaS platform offers a much more direct route to getting value from your data. It puts powerful tools into the hands of operations professionals and analysts, no coding required.

The In-House Build Path

Opting to build your own AI data analysis tool is a serious undertaking. It means significant upfront investment in both talent and infrastructure. You’ll need data scientists to develop the models, engineers to build and maintain the entire system, and a long runway for development and testing before you see any results.

While this path gives you maximum customization, it also saddles you with permanent responsibilities. You're on the hook for everything from model updates and bug fixes to scaling the infrastructure as your data volume grows. It’s a powerful option, but often totally impractical for teams that need answers now, not next year.

The SaaS Platform Path

In contrast, SaaS platforms like Row Sherpa are built for speed and accessibility. These tools wrap complex AI capabilities into a user-friendly interface, letting non-technical users run sophisticated data enrichment and analysis jobs in minutes. The focus shifts from building the engine to just using it to get the job done.

This approach offers some huge advantages:

- Speed to Market: You can start processing data on day one instead of waiting months (or years) for an internal build.
- Lower Upfront Cost: A predictable subscription fee replaces the massive capital expense for salaries and infrastructure.
- Zero Maintenance: The vendor handles all the updates, security, and technical upkeep so you don't have to.
- Predictable Results: Platforms are engineered to deliver consistent, scalable outputs, solving the unreliability of ad-hoc LLM prompts on large datasets.

The explosive growth of AI is pushing more and more companies toward this model. Recent reports show that AI adoption has surged to 72% as businesses hunt for code-free tools to handle tasks like CRM enrichment and deal screening. You can dive deeper into these trends with these critical AI statistics for business leaders.

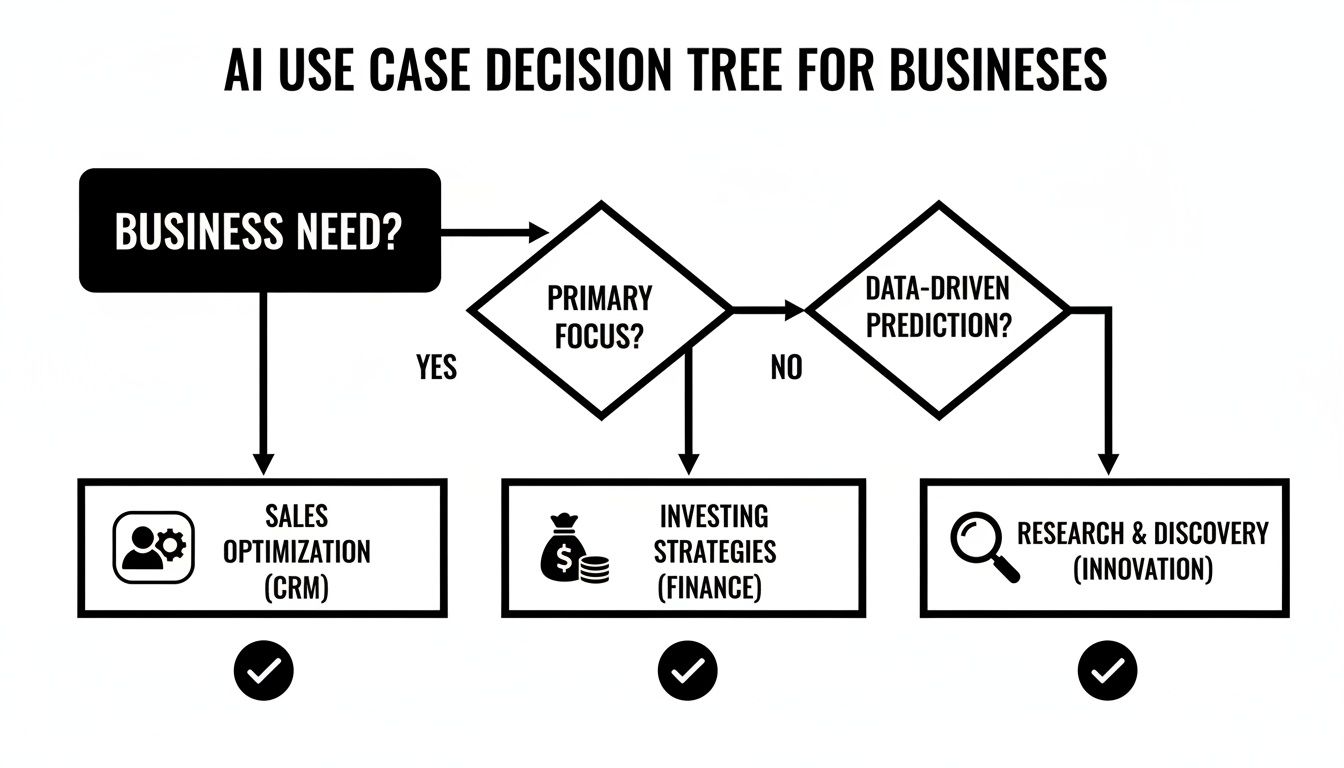

This decision tree helps visualize how different business needs map to specific AI use cases that platforms can solve right out of the box.

Whether you’re in sales, investing, or research, there's a clear, platform-driven path to solving your specific data challenges with AI.

Implementation Models: In-House AI vs SaaS Platform

The choice between building and buying isn't just about cost—it's about where you want your team to focus their energy. This table breaks down the practical considerations to help you decide which path makes the most sense for your goals.

| Consideration | In-House Build | SaaS Platform (e.g., Row Sherpa) |
|---|---|---|
| Upfront Cost | High: Requires salaries for engineers and data scientists, plus infrastructure costs. | Low: Predictable subscription fee, often with a free tier to start. |
| Time to Value | Slow: Months to years for development, testing, and deployment. | Immediate: Start running analysis jobs on day one. |
| Required Expertise | Deep: Needs a dedicated team of AI/ML engineers and data scientists. | Minimal: Designed for business users, analysts, and ops professionals. No code required. |
| Ongoing Maintenance | High: Your team is responsible for all updates, bug fixes, and security patches. | None: The platform vendor handles all maintenance and infrastructure management. |
| Scalability | Complex: Requires significant engineering effort to scale with data volume. | Built-in: Architected to handle large datasets and batch processing without user intervention. |
| Flexibility | Maximum: Fully customizable to unique, proprietary workflows. | High (within platform): Configurable for specific use cases but constrained by the tool's features. |
| Risk | High: Project can fail due to technical challenges, budget overruns, or staff turnover. | Low: Proven technology, predictable costs, and easy to switch if it doesn't meet needs. |

Ultimately, building in-house only makes sense if your use case is so unique that no existing tool can solve it and you have the long-term resources to support a custom software product. For everyone else, a SaaS platform provides a faster, cheaper, and more reliable way to get the job done.

Common Mistakes to Avoid

Jumping into AI for data analysis can feel like a massive leap forward. But moving too fast without a plan is a classic recipe for frustration and wasted cash. We see teams hit the same roadblocks over and over—predictable issues that kill projects before they even get off the ground.

Once you know what these pitfalls are, you can sidestep them with confidence.

Using a Chatbot for a Data Job

The most common error is picking the wrong tool for the job. Trying to use general-purpose chatbots for serious, repeatable data tasks is like trying to build a house with a Swiss Army knife. It might work for one tiny, one-off task, but it will fail spectacularly at scale.

These tools just aren't designed for the structured, consistent output that real analysis demands. They're built for conversation, not for creating clean, machine-readable datasets.

Inconsistent Prompts and Unreliable Outputs

Another huge mistake is using inconsistent, ad-hoc prompts. If two people on your team ask the same question in slightly different ways, they can get noticeably different answers from an AI.

This creates chaos when you need reliable data for something important, like lead scoring or market research. The lack of consistency makes the results completely untrustworthy and useless for any real business process.

So, how do you fix it? You need a single source of truth for your instructions.

- Build a Prompt Library: Create, test, and save standardized prompts for your most common tasks.
- Use Version Control: Treat your prompts like code. When you improve one, make sure the whole team gets the update.
- Demand Clarity: Write prompts that are specific and unambiguous. Even better, include a few examples of the exact output format you expect.

This kind of discipline ensures that every time you run a job, you get the same type of output. That's non-negotiable for any workflow that needs to scale.
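One lightweight way to get that single source of truth is to keep prompts in a shared, versioned structure instead of in people's heads. A minimal sketch follows; the prompt text, the entry name, and the version scheme are illustrative assumptions, not any particular platform's format.

```python
from string import Template

# A tiny prompt library: each entry has a version and a template with named slots.
PROMPT_LIBRARY = {
    "classify_ticket": {
        "version": "1.2.0",
        "template": Template(
            "Classify the support ticket into exactly one of: $categories.\n"
            "Reply with the category name only.\n"
            "Example: 'I was charged twice' -> billing issue\n"
            "Ticket: $text"
        ),
    },
}

def render_prompt(name: str, **fields: str) -> str:
    """Fill in a saved prompt so everyone sends the model identical instructions."""
    entry = PROMPT_LIBRARY[name]
    return entry["template"].substitute(**fields)

prompt = render_prompt(
    "classify_ticket",
    categories="billing issue, technical bug, feature request",
    text="The export button crashes the app.",
)
print(prompt)
```

Storing a file like this in the same repository as the rest of your tooling gives you the version history for free, and `substitute` raises an error if someone forgets a field rather than silently sending a half-filled prompt.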

Relying on AI without clean, well-structured data is like asking a master chef to cook a gourmet meal with spoiled ingredients. The final result will always be disappointing, no matter how skilled the chef is.

Ignoring Data Quality and Model Limits

Way too many teams underestimate the need for clean data. AI models are powerful, but they aren't magic. If you feed them messy, incomplete, or inaccurate data, you'll only get messy, inaccurate insights back.

The "garbage in, garbage out" rule is more important here than ever. Every minute you spend preparing your dataset is an investment that pays off tenfold.

Finally, ignoring the built-in limits of AI models is a surefire way to fail. For instance, all LLMs have a context window: a hard limit on how much text they can process in one request. If you paste a massive spreadsheet in one go, the input is either rejected or cut off once it exceeds that limit, and even when it fits, models tend to track rows buried in the middle of a very long input less reliably.

This is exactly why purpose-built platforms like Row Sherpa exist. They're designed to sidestep these problems. Instead of one giant, messy request, they apply a single, consistent prompt to each row, one by one. Every row is analyzed with the same logic, and because each request carries only one row, a long file never has to fit in a single context window.

That structured, row-by-row approach is what turns the promise of AI for data analysis into a reliable, predictable business asset.
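The row-by-row pattern can be sketched in a few lines. This illustrates the general idea only, not Row Sherpa's actual implementation; `call_model` is a stand-in stub marking where a real LLM call would go.

```python
import csv
import io

PROMPT = "Classify the sentiment of this review as positive, negative, or neutral:\n{review}"

def call_model(prompt: str) -> str:
    """Placeholder for a real LLM call; uses a trivial keyword rule so the sketch runs."""
    text = prompt.lower()
    if "love" in text:
        return "positive"
    if "broken" in text:
        return "negative"
    return "neutral"

def process_rows(rows):
    """Apply the same prompt to each row independently and attach the result."""
    results = []
    for row in rows:
        answer = call_model(PROMPT.format(review=row["review"]))
        results.append({**row, "sentiment": answer})
    return results

sample_csv = "id,review\n1,I love this product\n2,Arrived broken\n3,It is fine\n"
rows = list(csv.DictReader(io.StringIO(sample_csv)))
for result in process_rows(rows):
    print(result["id"], result["sentiment"])
```

Because each request contains one row and the same instructions, a malformed row can only spoil its own result, and the size of the file stops mattering to the model.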

Getting Started with AI-Powered Data Analysis

Here's the good news: using powerful AI for data analysis is no longer just for big companies with massive budgets and teams of engineers. Modern platforms have made this stuff accessible to everyone, letting you turn your most tedious data chores into a strategic, automated asset.

Getting your foot in the door is much simpler than you probably think. You don't need a huge, disruptive project or a complete overhaul of how you work. In fact, the best way to start is small. Pick one manageable task, prove the value, and build momentum from there.

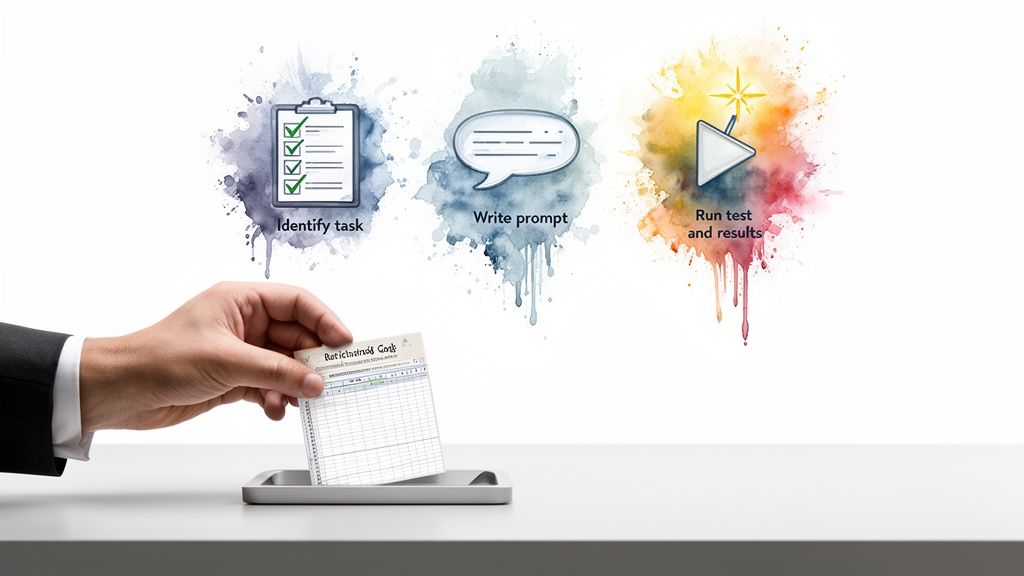

You can nail your first win by following a simple three-step process. It’s designed to get you tangible results fast, with minimal risk.

Your Three-Step Launch Plan

This framework is all about taking action. It walks you from identifying a real business pain point to validating a fix with a small-scale test, setting you up to expand with confidence.

- Identify One High-Impact Task: Start by finding a single, mind-numbing data task that eats up your team's time. Good candidates are things like categorizing new sales leads, tagging open-ended customer feedback from a survey, or classifying support tickets. The key is to pick a job where doing it manually is a constant struggle for consistency and scale.
- Write a Clear, Concise Prompt: Next, you need to tell the AI exactly what to do. Write a simple, unambiguous prompt that describes the task at hand. It should also specify the output format you want (like a category name or a sentiment score) and include a crystal-clear example. Think of this prompt as your reusable instruction manual for the AI.
- Run a Small Test Job: Finally, take your prompt for a spin using a batch-processing platform like Row Sherpa. Grab a small sample of your data, maybe just 50 or 100 rows from a spreadsheet, upload it, and run the job. This lets you check the results and tweak your prompt without burning through time or money.

The goal here is a quick win. By starting small, you can prove that the AI delivers accurate, consistent results for your specific problem. Once you've seen it work, you can scale up to process thousands of rows with total confidence.
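Pulling a small test sample is easy to do before you upload anything. Here is a sketch, assuming your data is a CSV; the contents are generated in memory purely so the example runs on its own, and `sample_rows` is an illustrative helper name.

```python
import csv
import io
import random

def sample_rows(csv_text: str, n: int, seed: int = 0) -> list:
    """Return up to n randomly chosen rows from CSV text, reproducibly."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    rng = random.Random(seed)  # fixed seed so the same sample can be re-drawn
    return rng.sample(rows, min(n, len(rows)))

# Stand-in for a real export: 1,000 made-up leads.
csv_text = "id,company\n" + "\n".join(f"{i},Company {i}" for i in range(1000))

test_batch = sample_rows(csv_text, n=50)
print(len(test_batch), "rows selected for the test job")
```

Sampling at random, rather than taking the first 50 rows, gives you a better chance of seeing the awkward cases that tend to cluster later in an export.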

This iterative approach takes all the guesswork out of the process. You get to see the benefits of automated data enrichment firsthand and build a reliable workflow you can count on for your most important data challenges.

Frequently Asked Questions

Got a few last questions before diving in? Here are the most common ones we hear from teams ready to put AI for data analysis to work.

How Is This Different From Traditional BI Tools?

Think of it like this: traditional Business Intelligence (BI) is your rearview mirror. It’s fantastic at showing you what’s already happened, visualizing clean, structured data to answer questions like, "What were our sales last quarter?"

AI for data analysis is the GPS navigation for the road ahead. It’s built to interpret messy, unstructured text and understand nuance. It answers forward-looking questions like, "Which of our new leads are most likely to convert based on their company websites?"

In short, BI reports on known patterns; AI discovers new ones.

Do I Really Need Coding Skills for a No-Code Platform?

Nope, not at all. That’s the entire point.

Platforms like Row Sherpa are built for the people who actually own the data—operations pros, analysts, and business users—not for engineers. If you can write a clear instruction in plain English and handle a spreadsheet, you’ve got all the skills you need.

How Does AI Handle My Messy Spreadsheet Data?

AI is surprisingly good at dealing with imperfect data, but it can't read minds. While it can often figure out what you mean from incomplete sentences or weird formatting, its performance really takes off with clean inputs.

Purpose-built platforms give you an edge here. They apply a single, consistent prompt to each row, one at a time. This simple trick prevents one messy row from confusing the analysis of the next, ensuring every single data point is processed with the same clean logic.

The key is consistency. By applying the same analytical lens to every row, AI systems create reliable, structured outputs even when the source data is a bit chaotic. This turns messy spreadsheets into valuable assets.

How Can I Be Sure the AI's Output Is Trustworthy?

You build trust through two things: validation and repeatability.

First, always run a small test job on a sample of your data and check the results by hand. Once you’ve dialed in your prompt to get the outputs you want, you can save it.

This ensures the exact same analytical model is applied every single time you run that task. The result? Consistent, trustworthy data you can actually build a workflow on.
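Checking results by hand gets more useful when you turn it into a number: the share of rows where the AI's label matches yours. A sketch with invented labels; the 90% threshold is an arbitrary assumption, not a recommended standard.

```python
def agreement_rate(human_labels: list, ai_labels: list) -> float:
    """Fraction of rows where the AI label matches the hand-checked label."""
    if len(human_labels) != len(ai_labels):
        raise ValueError("Label lists must be the same length")
    if not human_labels:
        raise ValueError("Need at least one labelled row")
    matches = sum(h == a for h, a in zip(human_labels, ai_labels))
    return matches / len(human_labels)

# Invented labels for a five-row test job.
human = ["billing", "bug", "feature", "bug", "billing"]
ai = ["billing", "bug", "feature", "billing", "billing"]

rate = agreement_rate(human, ai)
print(f"Agreement: {rate:.0%}")
if rate < 0.9:  # arbitrary threshold for illustration
    print("Refine the prompt and re-run the sample before scaling up.")
```

Re-running the same check after each prompt change tells you whether the tweak actually helped.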

Ready to stop wrestling with spreadsheets and start getting answers? Row Sherpa lets you upload your data, write a simple prompt, and get back perfectly enriched, structured results in minutes.

Try it for free and see how easy scalable data analysis can be.
